Breakthrough

High importance

@wildmindai

Importance score: 3 • Posted: February 20, 2026 at 11:35

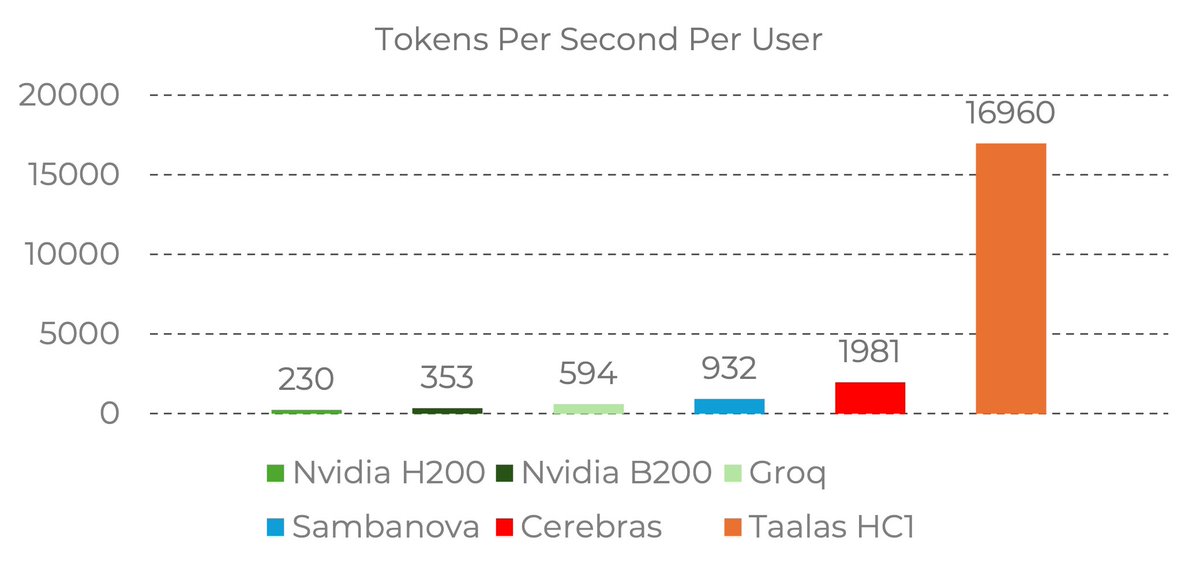

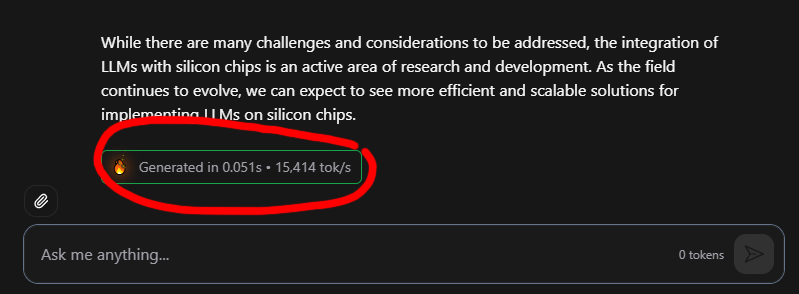

17,000 tokens per second!! Read that again! The LLM is hard-wired directly into silicon. No HBM, no liquid cooling, just raw specialized hardware. 10x faster and 20x cheaper than a B200. The "waiting for the LLM to think" era is dead. Code generates at the speed of human thought. This is the transition from brute-force GPU clusters to actual AI appliances.
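To put the quoted figures in concrete terms, here is a rough back-of-the-envelope sketch. It takes the post's 17,000 tokens per second and "10x faster" claims at face value; the 1,700 tokens per second baseline is only what those two numbers imply, not a measured B200 figure, and the function and constant names are hypothetical.

```python
def generation_seconds(num_tokens: int, tokens_per_second: float) -> float:
    """Time to emit num_tokens at a steady decode rate.

    Ignores prompt processing, batching, and network latency.
    """
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


CLAIMED_TPS = 17_000   # figure quoted in the post
CLAIMED_SPEEDUP = 10   # "10x faster", as claimed in the post
# Baseline implied by the two claims above (an assumption, not a benchmark).
IMPLIED_BASELINE_TPS = CLAIMED_TPS / CLAIMED_SPEEDUP

for n in (500, 2_000, 10_000):
    fast_ms = generation_seconds(n, CLAIMED_TPS) * 1000
    slow_ms = generation_seconds(n, IMPLIED_BASELINE_TPS) * 1000
    print(f"{n:>6} tokens: {fast_ms:8.1f} ms claimed vs {slow_ms:8.1f} ms implied baseline")
```

Under these assumptions, a 2,000-token response would take roughly 0.12 seconds at the claimed rate, against roughly 1.2 seconds at the implied baseline.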

Grok reasoning

Highlights a major LLM hardware advancement (17k tokens per second) with high engagement (5k+ likes); significant for AI infrastructure.

Likes

5,857

Reposts

691

Views

1,053,220

Tags

not related

artificial intelligence

hardware technology

machine learning

performance metrics

Tweet ID: 2024810128487096357

Prompt source: ai-news

Fetched at: February 21, 2026 at 07:01