Research paper

Medium

@HuggingPapers

Importance score: 4 • Posted: March 29, 2026 at 12:14

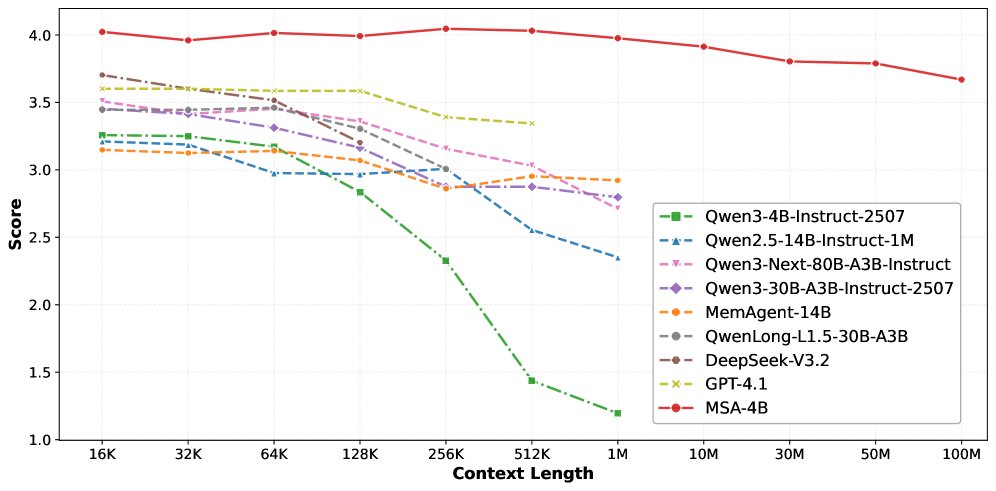

MSA breaks the 100M-token barrier. Memory Sparse Attention (MSA) achieves unprecedented 100M-token context lengths with near-linear complexity. The architecture maintains 94% accuracy at 1M tokens and outperforms RAG systems and frontier models, using end-to-end sparse attention with document-wise RoPE.
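The post does not say how "document-wise RoPE" is defined. A minimal sketch, assuming it means that rotary position indices restart at 0 at each document boundary instead of running across the whole 100M-token context, is shown below. The helper names `document_wise_positions` and `apply_rope` are hypothetical and are not taken from the MSA paper; the rotation itself is standard RoPE on consecutive dimension pairs.

```python
import math
from typing import List


def document_wise_positions(doc_lengths: List[int]) -> List[int]:
    """Hypothetical helper: position ids that restart at 0 for every document.

    Assumption: this is one plausible reading of "document-wise RoPE",
    not the confirmed MSA definition.
    """
    positions: List[int] = []
    for length in doc_lengths:
        positions.extend(range(length))  # 0, 1, ..., length - 1 per document
    return positions


def apply_rope(vec: List[float], pos: int, base: float = 10000.0) -> List[float]:
    """Standard rotary embedding: rotate each pair (x[2i], x[2i+1]) by pos * theta_i."""
    d = len(vec)
    if d % 2 != 0:
        raise ValueError("RoPE needs an even head dimension")
    out = [0.0] * d
    for i in range(d // 2):
        theta = pos * base ** (-2 * i / d)  # per-pair rotation angle
        c, s = math.cos(theta), math.sin(theta)
        x0, x1 = vec[2 * i], vec[2 * i + 1]
        out[2 * i] = x0 * c - x1 * s
        out[2 * i + 1] = x0 * s + x1 * c
    return out


if __name__ == "__main__":
    # Three documents of lengths 3, 2 and 4 packed into one memory.
    print(document_wise_positions([3, 2, 4]))  # → [0, 1, 2, 0, 1, 0, 1, 2, 3]
```

Under this assumption, a token's rotary position depends only on its offset within its own document, so the position range seen at inference presumably stays close to the range seen in training no matter how many documents are stored.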

Grok reasoning

Highlights a breakthrough in long-context attention that outperforms RAG; relevant to foundation models.

Likes: 248 • Reposts: 35 • Views: 13,060

Tweet ID: 2038228417737277497

Prompt source: ai-news

Fetched at: March 30, 2026 at 06:02