Hasan Toor

@hasantoxr

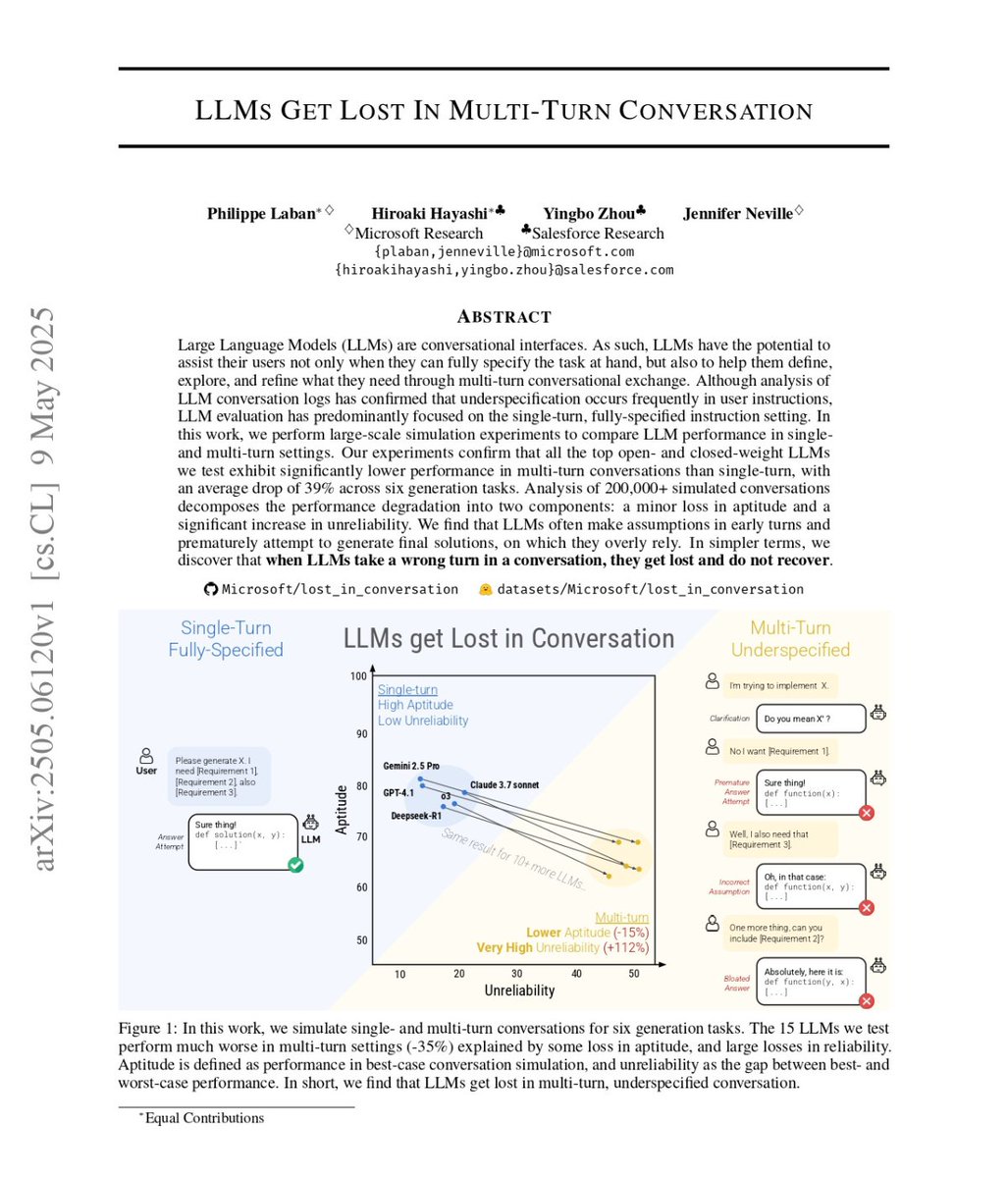

🚨BREAKING: Microsoft Research + Salesforce just dropped a paper that should scare every AI builder.

They tested 15 top LLMs, including GPT-4.1, Gemini 2.5 Pro, Claude 3.7 Sonnet, o3, DeepSeek R1, and Llama 4, across 200,000+ simulated conversations.

Single-turn prompt: 90% performance.
Multi-turn conversation: 65% performance.

Same model. Same task. Just... talking normally.

The culprit isn't intelligence. Aptitude only dropped 15%. Unreliability EXPLODED by 112%.

→ LLMs answer before you finish explaining (wrong assumptions get baked in permanently)
→ They fall in love with their first wrong answer and build on it
→ They forget the middle of your conversation entirely
→ Longer responses introduce more assumptions = more errors

Even reasoning models failed. o3 and DeepSeek R1 performed just as badly. Extra thinking tokens did nothing.

Setting temperature to 0? Still broken.

The fix right now: give your AI everything upfront in one message instead of back-and-forth.

Every benchmark you've seen was tested on single-turn prompts in perfect lab conditions. Real conversations break every model on the market and nobody's talking about it.
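
Here's a rough sketch of what "everything upfront" can look like in practice: collect the details you'd normally drip out over several turns and merge them into one fully specified prompt before sending anything. The helper name `consolidate_turns` and the example task are made up for illustration, not taken from the paper, and no particular LLM API is assumed.

```python
def consolidate_turns(task: str, details: list[str]) -> str:
    """Merge a task and its follow-up details into one fully specified prompt.

    Hypothetical helper for illustration; not from the paper.
    """
    # Drop empty entries and stray whitespace from each detail.
    cleaned = [d.strip() for d in details if d.strip()]
    lines = [task.strip()]
    if cleaned:
        lines.append("")
        lines.append("Requirements:")
        lines.extend(f"- {d}" for d in cleaned)
    return "\n".join(lines)


# Details that might otherwise arrive one message at a time.
prompt = consolidate_turns(
    "Write a Python function that parses a log file.",
    [
        "Each line is a JSON object.",
        "Skip lines that fail to parse.",
        "Return a list of dicts sorted by the 'timestamp' key.",
    ],
)
print(prompt)
```

Send `prompt` as a single message to whatever model you use. If you're already deep in a messy thread, the same idea applies: restate every requirement in a fresh conversation instead of patching the old one.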