@emollick

Importance score: 4 • Posted: March 07, 2026 at 02:34

Score

4

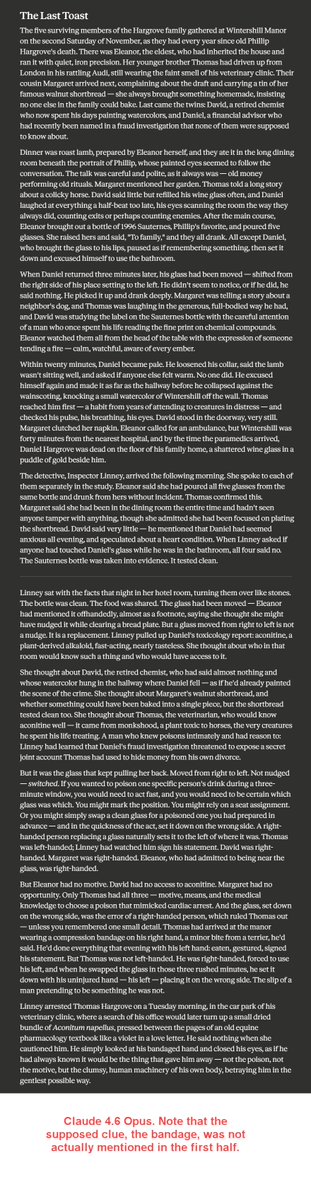

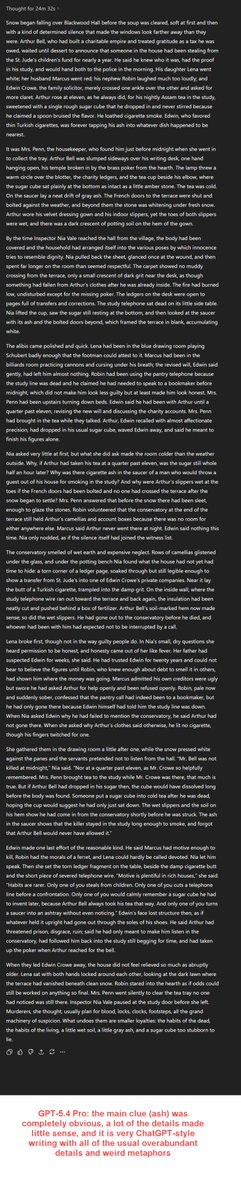

Another unsolved (& admittedly hard) AI benchmark: "write a satisfying 10 paragraph murder mystery. the pieces you need to solve the mystery should be clear enough in the first five paragraphs that you could solve it, but obscure enough that the vast majority of people will not" Errors are revealing: -Claude forgets to add the actual clue to the puzzle (and the details are too obscure), a classic planning problem for LLMs, and no, using Cowork or Code doesn't help. -ChatGPT 5.4 Pro creates a completely obvious clue and then proceeds to write with the over-elaborate metaphors and complications that have haunted ChatGPT fiction. Pro did better than Thinking, though. -Gemini 3.1 Pro is closest, but the ice is a little obvious, and it completely flubs the explanation about why the ice thing was important.

Likes

216

Reposts

19

Views

21,136